Menu

|

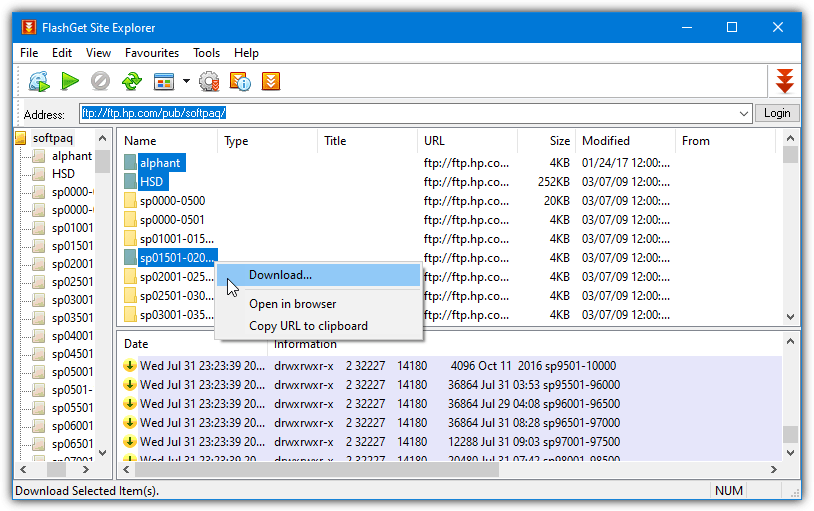

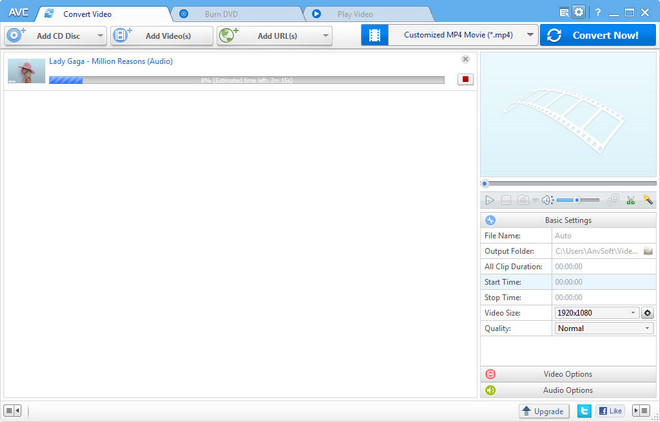

With a batch file, you save all the commands into one file and just run the batch file, instead of running your gazillion commands individually. I was facing the same situation in Mac OSX when I realised that I didn't know how to create a batch file in Mac OSX. Turns out it's pretty easy.

In DownThemAll!, click the Add URL(s) button available from the Manager window; fill in URL, renaming mask, download folder and optionally a referrer. Modify your URL to use batch descriptors as described below. Feel free to add even more batch descriptors, but keep in mind that downloading huge amounts of files will slow down DownThemAll! and overall performance.

With wget, the -N switch tells it to skip the download if the file has already been downloaded and is up-to-date (based on time-stamping). Next, copy and paste that column into Notepad and save it with a .bat file extension. Download wget.exe and put it in the same folder as the batch file. Double-click the batch file to run it and wait for the images to download.

To begin with, you are no longer in Redmond, WA. You are now in the land of Unix, and Windows .bat files are for the most part nothing like Unix shell scripts. There may be a tiny bit of overlap, but not much.

You actually might find it better to use Applications -> Automator to do what you want. Use the 'New Folder' action.

Or if you must, the 'Run Shell Script' action.

If you are going to proceed with a shell script, even one that is run from Automator's 'Run Shell Script' action, then a comment starts with a # (US keyboard pound sign). The # can be at the beginning of the line, or the comment can be the last thing on a command line. The comment continues until the end of the line.

Upper and lowercase matters. That is to say, everything in a shell script is case sensitive. So 'CDATE' is a different variable from 'cdate', and 'Cdate' is different, as well as 'CDate', etc.
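A minimal sketch of both points, trailing comments and case sensitivity. The values assigned here are placeholders chosen only for illustration:

```shell
# Three variables whose names differ only in letter case.
CDATE="upper"
cdate="lower"
Cdate="mixed"

# Each name refers to its own variable, so all three values survive.
echo "${CDATE} ${cdate} ${Cdate}"   # prints: upper lower mixed
```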

'md' (make dir) in Unix is 'mkdir'. For more information about 'mkdir', from an Applications -> Utilities -> Terminal session, enter the command:

man mkdir

Or just Google 'mkdir'

I do not really understand the .bat FOR command, so all I can tell you is that for a shell script there is a command called 'for' but that is totally where the similarities end. Oh yea, 'for' is lowercase ONLY. If you want information on the 'bash' 'for' command, then

man bash

Or maybe some Googling for creating bash 'for' loops
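As a starting point, here is a small sketch of a 'for' loop over a fixed list of words. The folder names are hypothetical, and the loop only prints what it would do rather than creating anything:

```shell
# Loop over a fixed list of words; 'for', 'in', 'do', and 'done' are all lowercase.
# The folder names are placeholders, not taken from any real project.
created=""
for name in docs src tests; do
    created="${created}${name}/ "
    echo "would create folder: ${name}"
done

echo "visited: ${created}"
```

Replacing the echo inside the loop with a 'mkdir' call would create one folder per word.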

Getting the current date information would be done using the 'date' command, and you can get information on 'date' from:

man date

For example, this command

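CDATE="$(date +%Y-%m-%d)"

will put a date such as '2017-12-28' into the ALL UPPERCASE variable 'CDATE'. The above command does NOT have any spaces between CDATE, the =, and the "$(...)". If you add spaces the assignment will not work. The double quotes matter too: inside single quotes, the $(...) would be stored as literal text instead of being run.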

The various % codes can be found in

man strftime
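A short sketch of a few of those codes in use. The variable name STAMP is made up for this example, and the printed values depend on when the commands are run:

```shell
# Capture today's date as YYYY-MM-DD. No spaces are allowed around the '='.
CDATE="$(date +%Y-%m-%d)"
echo "${CDATE}"

# Other strftime codes work the same way:
# %H is the hour (00-23), %M the minute, %A the full weekday name.
STAMP="$(date '+%Y-%m-%d %H:%M')"
echo "${STAMP}"
echo "Today is $(date +%A)"
```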

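And you would substitute the contents of 'CDATE' with a ${CDATE}:

mkdir -p -- "- History/${CDATE} project directory created"

If -<space> has special meaning for 'md', it has none for the Unix 'mkdir' command. A name that begins with a hyphen would, however, be read as an option, so the -- tells 'mkdir' that everything after it is a folder name, and the double quotes keep the spaces while still letting ${CDATE} expand. The Folder created by this command will be: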

'Your Home Folder' -> '- History' -> '2017-12-28 project directory created'

where the folder '- History' will be created in the current directory (your Home folder in a new Terminal session), and '2017-12-28 project directory created' will be a subfolder under '- History'.

If this is NOT what you want, then you will need to modify the various 'md' commands to follow Unix 'mkdir' syntax.
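Putting the pieces together, a sketch of the whole sequence might look like the following. It assumes a throwaway directory from mktemp (the name workdir is invented here) so that experimenting does not leave folders in your Home folder:

```shell
# Work in a throwaway directory so nothing lands in the Home folder by accident.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1

CDATE="$(date +%Y-%m-%d)"

# Double quotes keep the spaces in the path but still expand ${CDATE}.
# -p creates the parent folder '- History' as needed.
# -- ends option parsing, so the leading hyphen is not mistaken for an option.
mkdir -p -- "- History/${CDATE} project directory created"

# List the new subfolder; ls needs the same -- for the same reason.
ls -- "- History"
```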

If you want to get deeper into Unix 'bash' shell scripting, then Google 'macos bash script basics', and you will find some introductory guides.

Dec 28, 2017 12:17 PM

The curl tool lets us fetch a given URL from the command-line. Sometimes we want to save a web file to our own computer. Other times we might pipe it directly into another program. Either way, curl has us covered.

See its documentation here.

This is the basic usage of curl:

curl http://some.url --output some.file

That --output flag denotes the filename (some.file) of the downloaded URL (http://some.url).

Let's try it with a basic website address:

curl http://example.com --output my.file

Besides the display of a progress indicator (which I explain below), you don't have much indication of what curl actually downloaded. So let's confirm that a file named my.file was actually downloaded.

Using the ls command will show the contents of the directory, which should now include my.file.

And if you use cat to output the contents of my.file (cat my.file), you will see the HTML that powers http://example.com.
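The download-then-inspect sequence can be sketched as one runnable script. As an assumption made only so the sketch works without a network connection, a small local page (page.html, invented here) stands in for http://example.com and is fetched through a file:// URL; setting url to http://example.com follows the article exactly:

```shell
# Assumption: a local stand-in page replaces http://example.com so this runs offline.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1
printf '<html>\n<body><h1>Example Domain</h1></body>\n</html>\n' > page.html
url="file://${workdir}/page.html"

# Download the URL and save it under the name given to --output.
curl "${url}" --output my.file

# Confirm the file exists, then print what was downloaded.
ls
cat my.file
```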

I thought Unix was supposed to be quiet?

Let's back up a bit: when you first ran the curl command, you might have seen a quick blip of a progress indicator.

If you remember the Basics of the Unix Philosophy, one of the tenets is:

Rule of Silence: When a program has nothing surprising to say, it should say nothing.

In the example of curl, the author apparently believes that it's important to tell the user the progress of the download. For a very small file, that status display is not terribly helpful. Let's try it with a bigger file (this is the baby names file from the Social Security Administration) to see how the progress indicator animates.

Quick note: If you're new to the command-line, you're probably used to commands executing every time you hit Enter. In this case, the command is so long (because of the URL) that I broke it down into two lines with the use of the backslash, i.e. \

This is solely to make it easier for you to read. As far as the computer cares, it just joins the two lines together as if that backslash weren't there and runs it as one command.
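A small sketch of that joining behaviour, using echo with arbitrary filler words instead of a long URL. The variable names joined and single are made up for the comparison:

```shell
# A backslash at the very end of a line continues the command on the next line.
joined="$(echo one two \
    three four)"

# The same command written on a single line.
single="$(echo one two three four)"

echo "${joined}"   # prints: one two three four
```

Note that the backslash has to be the very last character on the line; a space after it would break the continuation.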

Make curl silent

The curl progress indicator is a nice affordance, but let's just see if we can get curl to act like all of our Unix tools. In curl's documentation of options, there is an option for silence:

-s, --silent

Silent or quiet mode. Don't show progress meter or error messages. Makes Curl mute. It will still output the data you ask for, potentially even to the terminal/stdout unless you redirect it.

Try it out:
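One way to try it is sketched below. The local stand-in page and the file:// URL are assumptions made so the sketch runs offline; swap in http://example.com to repeat the earlier download. The variable noise is invented here to capture whatever curl would have printed:

```shell
# Assumption: a local stand-in page replaces http://example.com so this runs offline.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1
printf '<html>\n<body><h1>Example Domain</h1></body>\n</html>\n' > page.html
url="file://${workdir}/page.html"

# --silent suppresses the progress meter; the download itself is unchanged.
curl "${url}" --silent --output my.file

# Capture anything curl writes to the terminal to show that it stays quiet.
noise="$(curl "${url}" --silent --output my.file 2>&1)"
echo "curl printed ${#noise} characters of status output"
```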

Repeat and break things

So those are the basics for the curl command. There are many, many more options, but for now, we know how to use curl to do something that is actually quite powerful: fetch a file, anywhere on the Internet, from the simple confines of our command-line.

Before we go further, though, let's look at the various ways this simple command can be re-written and, more crucially, screwed up:

Shortened options

As you might have noticed in the --silent documentation, it lists the alternative form of -s. Many options for many tools have a shortened alias. In fact, --output can be shortened to -o.
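A sketch comparing the long and short spellings. The local stand-in page and the output names long.file and short.file are assumptions for illustration; replace url with http://example.com to run it against the real site:

```shell
# Assumption: a local stand-in page replaces http://example.com so this runs offline.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1
printf '<html>\n<body><h1>Example Domain</h1></body>\n</html>\n' > page.html
url="file://${workdir}/page.html"

# Long and short spellings of the same two options.
curl "${url}" --silent --output long.file
curl "${url}" -s -o short.file

# Both downloads should be byte-for-byte identical.
cmp long.file short.file && echo "same content"
```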

Now watch out: the number of hyphens matters. Getting it wrong, for instance writing -output with one hyphen or --o with two, would cause an error or other unexpected behavior.

Also, mind the position of my.file, which can be thought of as the argument to the -o option. The argument must follow after the -o… because curl.

If you instead placed -s between -o and my.file, how would curl know that my.file, and not -s, is the argument, i.e. what you want to name the content of the downloaded URL?

In fact, you might see that you've created a file named -s… which is not the end of the world, but not something you want to happen unwittingly.

Order of options

By and large (from what I can think of off the top of my head), the order of the options doesn't matter.

In fact, the URL, http://example.com, can be placed anywhere in the mix.
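A sketch of a few equivalent orderings. The local stand-in page and the three output names are assumptions for illustration; in every variant the file name still comes directly after -o:

```shell
# Assumption: a local stand-in page replaces http://example.com so this runs offline.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1
printf '<html>\n<body><h1>Example Domain</h1></body>\n</html>\n' > page.html
url="file://${workdir}/page.html"

# The same request with the options and the URL in different positions.
curl -s -o first.file "${url}"
curl "${url}" -o second.file -s
curl -o third.file "${url}" -s

# All three files should now be listed alongside page.html.
ls
```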

A couple of things to note:

And you will have a problem.

No options at all

The last thing to consider is what happens when you just curl for a URL with no options (which, after all, should be optional). Before you try it, think about another part of the Unix philosophy:

This is the Unix philosophy: Write programs that do one thing and do it well. Write programs to work together. Write programs to handle text streams, because that is a universal interface.

If you curl without any options except for the URL, the content of the URL (whether it's a webpage, or a binary file, such as an image or a zip file) will be printed out to screen. Try it:

Output:

Even with the small amount of HTML code that makes up the http://example.com webpage, it's too much for human eyes to process (and reading raw HTML wasn't meant for humans).

Standard output and connecting programs

But what if we wanted to send the contents of a web file to another program? Maybe to wc, which is used to count words and lines? Then we can use the powerful Unix feature of pipes. In this example, I'm using curl's silent option so that only the output of wc (and not the progress indicator) is seen. Also, I'm using the -l option for wc to just get the number of lines in the HTML for example.com:

Number of lines in example.com is: 50
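The pipe described above can be sketched as follows. As an assumption for offline use, a three-line local page stands in for http://example.com, so the count printed here will differ from the 50 reported for the real site:

```shell
# Assumption: a three-line local stand-in page replaces http://example.com.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1
printf '<html>\n<body><h1>Example Domain</h1></body>\n</html>\n' > page.html
url="file://${workdir}/page.html"

# Send the page straight into wc; -l counts lines. --silent keeps the
# progress meter out of the way so only the count is printed.
curl --silent "${url}" | wc -l
```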

Now, you could've also done the same in two lines:

But not only is that less elegant, it also requires creating a new file called temp.file. Now, this is a trivial concern, but someday, you may work with systems and data flows in which temporarily saving a file is not an available luxury (think of massive files).
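For comparison, the two-step version mentioned above might look like this sketch. The local stand-in page is an assumption for offline use, and the exact commands in the original may have differed:

```shell
# Assumption: a three-line local stand-in page replaces http://example.com.
workdir="$(mktemp -d)"
cd "${workdir}" || exit 1
printf '<html>\n<body><h1>Example Domain</h1></body>\n</html>\n' > page.html
url="file://${workdir}/page.html"

# Step 1: save the download to an intermediate file.
curl --silent "${url}" --output temp.file

# Step 2: count the lines of that file.
wc -l < temp.file
```

Unlike the piped version, this leaves temp.file on disk until you delete it.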
